Notes on using coding assistants effectively, from someone who has been building production software with AI since 2023.

Example of a good prompt to drive an e2e feature development loop

> investigate backend/utils/board.js and clone function. understand what's the data flow when `convertNodesToPositionsAndEmployees` is called. my goal is to make the convert logic part of initial clone so that we don't have 2 actions, where one is just clone and 2nd is convert. think about best way to approach it and plan it. start with unit tests that characterize current behavior and potential edge cases before proceeding

Why is it good?

- references file(s) for context narrowing

- references functions to focus on

- provides minimal context on the transformation needed

- asks to “think” to trigger thinking mode (you can also use “ultrathink” or “think hard”) – this one is Claude-specific

- asks to plan (forces claude code to prepare step by step process)

- asks to characterize current behavior via tests so that existing logic can be verified

- asks to implement changes after planning and writing tests
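The "characterize current behavior" step could look like the sketch below. The real `convertNodesToPositionsAndEmployees` lives in the codebase the prompt refers to and is not shown in these notes, so the implementation here is a hypothetical stand-in, as are the node fields (`type`, `title`, `name`, `positionId`) and the output shape. The tiny `expectEqual` helper is also an assumption, used only so the example runs without a test framework; in a real repo the same cases would go into whatever test runner the project already uses.

```javascript
// HYPOTHETICAL stand-in for the real function in backend/utils/board.js.
// Assumed behavior: split a flat list of nodes into positions and employees.
function convertNodesToPositionsAndEmployees(nodes) {
  const positions = [];
  const employees = [];
  for (const node of nodes) {
    if (node.type === 'position') {
      positions.push({ id: node.id, title: node.title });
    } else if (node.type === 'employee') {
      employees.push({
        id: node.id,
        name: node.name,
        positionId: node.positionId ?? null,
      });
    }
    // Any other node type is silently dropped; a test below pins that down.
  }
  return { positions, employees };
}

// Minimal assertion helper (assumption: stands in for a real test runner).
function expectEqual(actual, expected, label) {
  const a = JSON.stringify(actual);
  const e = JSON.stringify(expected);
  if (a !== e) {
    throw new Error(`${label}: expected ${e}, got ${a}`);
  }
}

// Characterization tests record what the code does today, not what it should do.
function runCharacterizationTests() {
  let passed = 0;

  expectEqual(
    convertNodesToPositionsAndEmployees([
      { id: 'p1', type: 'position', title: 'CTO' },
      { id: 'e1', type: 'employee', name: 'Ada', positionId: 'p1' },
    ]),
    {
      positions: [{ id: 'p1', title: 'CTO' }],
      employees: [{ id: 'e1', name: 'Ada', positionId: 'p1' }],
    },
    'typical board'
  );
  passed += 1;

  expectEqual(
    convertNodesToPositionsAndEmployees([]),
    { positions: [], employees: [] },
    'empty input'
  );
  passed += 1;

  expectEqual(
    convertNodesToPositionsAndEmployees([{ id: 'e2', type: 'employee', name: 'Bob' }]),
    { positions: [], employees: [{ id: 'e2', name: 'Bob', positionId: null }] },
    'employee without a position'
  );
  passed += 1;

  expectEqual(
    convertNodesToPositionsAndEmployees([{ id: 'x', type: 'note' }]),
    { positions: [], employees: [] },
    'unknown node type is dropped'
  );
  passed += 1;

  return passed;
}

runCharacterizationTests();
```

Once tests like these pass against the existing code, the clone and convert steps can be merged and the same tests rerun to confirm nothing changed unintentionally.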

Effective pattern to automate writing integration tests

Provide claude/codex with a flow reference and ask it to mimic that flow in a test file. This provides enough rails to make it focus on the right endpoints, and thus on the right files and functions. Example:

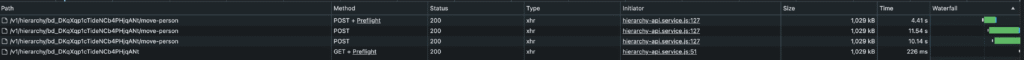

How does it work? Start with manual testing and perform the operations that trigger a specific flow. Here, triggering a move-person related event 3 times and observing the GET request afterwards was enough to make the assistant focus on the correct paths and mimic that flow in a test characterizing the behavior.
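A test that mimics such a flow could be shaped like the sketch below. The screenshot's actual requests are not reproduced here, so the paths `/events/move-person` and `/board`, the payload fields, and the status codes are all hypothetical. The backend is replaced by a small in-memory fake so the example runs on its own; a real integration test would send the same sequence to the running app.

```javascript
// HYPOTHETICAL in-memory stand-in for the backend (paths and payloads are assumptions).
function createFakeBoardApi() {
  const positionByPerson = { ada: 'p1' };
  const log = [];
  return {
    log,
    request(method, path, body) {
      log.push(`${method} ${path}`);
      if (method === 'POST' && path === '/events/move-person') {
        positionByPerson[body.personId] = body.toPositionId;
        return { status: 202, body: null };
      }
      if (method === 'GET' && path === '/board') {
        return { status: 200, body: { ...positionByPerson } };
      }
      return { status: 404, body: null };
    },
  };
}

// Replays the manually observed flow: three move-person events, then one GET.
function replayMovePersonFlow(api) {
  for (const toPositionId of ['p2', 'p3', 'p4']) {
    const res = api.request('POST', '/events/move-person', {
      personId: 'ada',
      toPositionId,
    });
    if (res.status !== 202) {
      throw new Error(`move to ${toPositionId} failed with status ${res.status}`);
    }
  }
  return api.request('GET', '/board');
}

const fakeApi = createFakeBoardApi();
const finalBoard = replayMovePersonFlow(fakeApi);

// The GET after the three moves should reflect the last move only.
if (finalBoard.status !== 200 || finalBoard.body.ada !== 'p4') {
  throw new Error(`unexpected final board: ${JSON.stringify(finalBoard)}`);
}
```

The recorded request log (`fakeApi.log`) plays the role of the flow reference: it is the same ordered list of calls that was observed during manual testing.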

TDD as keyword

It seems like claude understands “TDD” as a keyword for writing a certain type of test.

As an example: I wanted to create a test that demonstrates the issue of an optimistic update being overwritten by server data. claude wrote it so that the unit test was more of an orchestrator: it demonstrated the result using console.log and passed by asserting on the console log output.

I had to fight it a bit until we got to the point where mentioning “tdd principles” made it write a test that actually fails when it encounters this kind of issue, so that I could iterate on logic updates until the test passed.
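The difference between the two kinds of test can be sketched as follows. Everything here is hypothetical: the state shape, the `pendingKeys` set, and both merge functions are made up for illustration and are not the code from the project. The point is only that the test throws when the optimistic value is lost, instead of logging the result.

```javascript
// Naive merge (assumed buggy behavior): server data always wins,
// so a pending optimistic edit is overwritten.
function mergeNaive(localState, serverState, pendingKeys) {
  return { ...serverState };
}

// Candidate fix: keys with a pending, unconfirmed edit keep the local value.
function mergeKeepingPending(localState, serverState, pendingKeys) {
  const merged = { ...serverState };
  for (const key of pendingKeys) {
    if (key in localState) {
      merged[key] = localState[key];
    }
  }
  return merged;
}

// A test in the TDD sense: it fails (throws) when the issue is present.
function testOptimisticUpdateSurvivesStaleResponse(merge) {
  const localState = { ada: 'p2' };  // optimistic move, not yet confirmed
  const staleServer = { ada: 'p1' }; // response computed before the move landed
  const merged = merge(localState, staleServer, new Set(['ada']));
  if (merged.ada !== 'p2') {
    throw new Error(`optimistic update overwritten: expected p2, got ${merged.ada}`);
  }
}

// Red: the naive merge makes the test fail.
let naiveFailed = false;
try {
  testOptimisticUpdateSurvivesStaleResponse(mergeNaive);
} catch (err) {
  naiveFailed = true;
}

// Green: the candidate fix makes the same test pass (no throw).
testOptimisticUpdateSurvivesStaleResponse(mergeKeepingPending);
```

With a failing test like this in place, each logic change can be checked by rerunning it until it goes green.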

Workflows that worked well in early 2025

- map a flow and summarize

- example: summarize login flow and its edge cases in md file

- keep revising md file – “improve by looking at @file.js”

- create 10x better cursor rules

- use multiple LLMs to work out a plan and ask to create a doc to attach in other conversations

- claude code – great at low-specificity tasks and at digging into the code with loose references

- documentation – writing to md files in the repo so that there is human-reviewed, text-based documentation

- PR & QA – understand the changes, write a dev note about affected parts

- context is precious – in my experience, output quality drops once more than about 50% of the context window is used, which is why optimised, dev-reviewed documentation pieces can be useful

- learn to build the minimal context – my prediction for an essential skill: effectively reading, reviewing and distilling AI-generated code down to the minimal viable change

- when you see that the scope is too big for the LLM, take the ideas, reject the changes and try to specify a smaller piece in the prompt

- use case: understand logs

- use case: tell me if it makes sense and make it 10x better considering… (e.g. refining an existing prompt)

- documentation links – give an example to work from (external/internal)

- https://simonwillison.net/2025/Mar/11/using-llms-for-code/

- (claude) “think hard”

- (cursor) refer to @diff to update

- (cursor) refer to @folder but also to specific files for better guidance
